Y-O-Y? (Part II)

The first part of this was planned as the only part; however, to keep things concise I decided to split it. So here are some more in-depth observations on how I am investing my time in this project.

Implementing the features

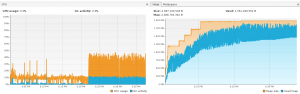

Profiling the game while the CPU was under heavy load (due to ongoing rendering) revealed some interesting issues. The maximum heap size is around 2 GB, and most of it is actually in use. Once about two thirds of the heap is used, GC activity seems to increase:

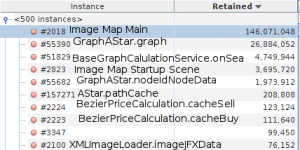
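The profiler shows the relation between heap usage and GC activity. If you want to log the same two numbers from inside the game, for example to check whether GC runs pile up once roughly two thirds of the heap is in use, the standard `java.lang.management` API exposes both. This is a minimal sketch that assumes the game runs on a regular JVM. `HeapWatch` and its methods are hypothetical names. The maximum it reports is the heap limit set with `-Xmx`, or the JVM default if none is given.

```java
import java.lang.management.GarbageCollectorMXBean;
import java.lang.management.ManagementFactory;
import java.lang.management.MemoryUsage;

/** Hypothetical helper that samples heap usage and GC counts from inside the running JVM. */
public class HeapWatch {
    /** Fraction of the maximum heap currently in use, or -1 if no maximum is defined. */
    static double heapUsedFraction() {
        MemoryUsage heap = ManagementFactory.getMemoryMXBean().getHeapMemoryUsage();
        long max = heap.getMax(); // -1 if undefined
        return max > 0 ? (double) heap.getUsed() / max : -1;
    }

    /** Sum of collection counts over all garbage collectors since JVM start. */
    static long totalGcCount() {
        long total = 0;
        for (GarbageCollectorMXBean gc : ManagementFactory.getGarbageCollectorMXBeans()) {
            long count = gc.getCollectionCount(); // -1 if undefined for this collector
            if (count > 0) {
                total += count;
            }
        }
        return total;
    }

    public static void main(String[] args) throws InterruptedException {
        long previousGcCount = totalGcCount();
        for (int sample = 0; sample < 3; sample++) {
            Thread.sleep(500);
            long gcCount = totalGcCount();
            // Logging both values side by side shows whether GC runs increase as the heap fills.
            System.out.printf("heap used: %.1f%%, GC runs since last sample: %d%n",
                    heapUsedFraction() * 100, gcCount - previousGcCount);
            previousGcCount = gcCount;
        }
    }
}
```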

An investigation of the used memory further showed which objects take up the most memory. The results were not surprising, but they did show that there might be room for improvement:

The largest objects that cannot be collected by the GC are Maps. There are two large maps caching the images, and several maps that belong to the context of path finding and other cached calculations.


This raised the question of whether the image cache is necessary at all: images used in the UI are also referenced from the scene graph, and the large scene images have only one instance in the scene graph besides the cache. The idea behind the caching was that images reused in the UI do not need multiple Image instances. This led me to the conclusion not to cache images as a general rule, but only those that are specially marked to be cached.
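The rule "cache only what is explicitly marked" can be sketched as a small generic cache. This is a hypothetical illustration, not the game's actual code. `SelectiveCache`, the loader function, and the marking predicate are made-up names. A `String` stands in for an `Image` so the sketch runs without any UI library. Unmarked values are returned but not stored, so once the scene graph drops them, the GC can reclaim them.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.Set;
import java.util.function.Function;
import java.util.function.Predicate;

/**
 * Hypothetical cache that keeps a loaded value only if its key is marked for caching.
 * Unmarked values are returned but not retained, so they become collectable
 * as soon as nothing else references them.
 */
public class SelectiveCache<K, V> {
    private final Map<K, V> cache = new HashMap<>();
    private final Function<K, V> loader;
    private final Predicate<K> markedForCaching;

    public SelectiveCache(Function<K, V> loader, Predicate<K> markedForCaching) {
        this.loader = loader;
        this.markedForCaching = markedForCaching;
    }

    public V get(K key) {
        V cached = cache.get(key);
        if (cached != null) {
            return cached;
        }
        V value = loader.apply(key);
        if (markedForCaching.test(key)) {
            cache.put(key, value); // only explicitly marked entries stay in memory
        }
        return value;
    }

    public int size() {
        return cache.size();
    }

    public static void main(String[] args) {
        int[] loads = {0};
        Set<String> marked = Set.of("ui/button.png"); // small UI image reused in many places
        SelectiveCache<String, String> images = new SelectiveCache<>(name -> {
            loads[0]++;
            return "image:" + name; // stands in for decoding a real Image
        }, marked::contains);

        images.get("ui/button.png");
        images.get("ui/button.png");  // served from the cache, no second load
        images.get("scene/fire.png"); // large scene image: loaded but not retained
        System.out.println(images.size() + " cached, " + loads[0] + " loads"); // 1 cached, 2 loads
    }
}
```

Using a predicate keeps the marking decision outside the cache, so which images count as "marked" can be changed without touching the caching logic.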

The other map structures have something peculiar in common: their values are primitive types. As a primitive type cannot be stored in a Map directly, it is boxed into its object representation, which adds memory overhead. Therefore I looked into alternative approaches. It turns out that there are quite a few libraries that address this issue. I have detailed the findings in a separate blog post.
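In a `HashMap<Integer, Integer>`, every entry needs an internal node object plus boxed `Integer` objects for key and value. Only values from -128 to 127 come from a shared cache. Primitive collections avoid this by storing the raw ints in arrays. The sketch below is a hypothetical, minimal int-to-int map using open addressing with linear probing. It assumes both key and value are ints and only illustrates the technique; it is not one of the libraries mentioned above.

```java
import java.util.Arrays;

/**
 * Hypothetical minimal int -> int hash map using open addressing with linear probing.
 * Keys and values live in plain int[] arrays, so no Integer objects are allocated.
 */
public class IntIntMap {
    private static final int FREE = Integer.MIN_VALUE; // marks an empty slot; cannot be used as a key
    private int[] keys = new int[16];                  // capacity is always a power of two
    private int[] values = new int[16];
    private int size;

    public IntIntMap() {
        Arrays.fill(keys, FREE);
    }

    /** Returns the slot holding {@code key}, or the empty slot where it would be inserted. */
    private static int slot(int key, int[] table) {
        int mask = table.length - 1;
        int h = key * 0x9E3779B9;        // multiplicative hashing scrambles sequential keys
        int i = (h ^ (h >>> 16)) & mask;
        while (table[i] != FREE && table[i] != key) {
            i = (i + 1) & mask;          // linear probing: try the next slot
        }
        return i;
    }

    public void put(int key, int value) {
        if (key == FREE) throw new IllegalArgumentException("Integer.MIN_VALUE is reserved");
        if ((size + 1) * 2 > keys.length) resize(); // keep the load factor at or below 0.5
        int i = slot(key, keys);
        if (keys[i] == FREE) {
            keys[i] = key;
            size++;
        }
        values[i] = value;
    }

    public int get(int key, int defaultValue) {
        if (key == FREE) return defaultValue;
        int i = slot(key, keys);
        return keys[i] == key ? values[i] : defaultValue;
    }

    public int size() {
        return size;
    }

    private void resize() {
        int[] oldKeys = keys;
        int[] oldValues = values;
        keys = new int[oldKeys.length * 2];
        values = new int[oldKeys.length * 2];
        Arrays.fill(keys, FREE);
        for (int j = 0; j < oldKeys.length; j++) {
            if (oldKeys[j] != FREE) {
                int i = slot(oldKeys[j], keys);
                keys[i] = oldKeys[j];
                values[i] = oldValues[j];
            }
        }
    }

    public static void main(String[] args) {
        IntIntMap pathCosts = new IntIntMap(); // e.g. tile index -> cached path cost (illustrative)
        for (int tile = 0; tile < 1000; tile++) {
            pathCosts.put(tile, tile * 3);
        }
        System.out.println(pathCosts.get(42, -1));   // 126
        System.out.println(pathCosts.get(5000, -1)); // -1
        System.out.println(pathCosts.size());        // 1000
    }
}
```

Reserving one key value as the "empty" marker keeps the arrays primitive. Real libraries typically handle that reserved key separately instead of rejecting it.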

Providing the Artwork

The fire

The fire animation is based solely on BlenderGuru's tutorial on this topic. However, when you look at the fire animation, you can see that it is far more complex than the simple fire from the tutorial. The most obvious difference is that it has multiple fires instead of one, and this is basically the only big difference. There are also no embers. The trick to get multiple fires into the animation at a fairly high resolution is to have multiple fire sources but only one smoke domain: with one domain per source the bake time is about 20 hours, while with a single domain it is cut down to 4 hours. The other settings that differ are mainly due to the different scale of the scene.

The celebration

The celebration animation picks up the fire theme, although on a smaller scale. The main focus was the animation of various characters. Some of the characters used here are old ones from the guild scene, which were fixed up with proper grouping of the model and armature as described in this video. The main achievement, however, was the creation of new characters with Bastioni Lab. This tool lets you create really high-quality characters that basically do not need custom-painted faces; adding hair and beards is normally sufficient. In the resulting scene, however, these details are not even visible.

These characters need proper clothes. That is easy with Bastioni Lab, as it adjusts clothes from a base mesh, which makes it possible to reuse the same cloth models over and over again. Therefore I looked into how to properly model cloth, as opposed to just copying part of the character's body and adjusting it slightly. Where I can still make major improvements is the combination with rigging: at the moment my process is to remove the underlying mesh of the character to avoid issues where the body intersects with the clothing. The most promising approach that I have seen so far is this. However, as Bastioni Lab is still in development and there are some points for clothing and hair on the roadmap, it might also provide a solution.

The second issue I am not yet really happy with is the rigging itself. Both tools (MakeHuman and Bastioni Lab) generate a basic rig, but if I need a rig that allows switching between FK and IK (forward and inverse kinematics), I am stuck. As far as I have seen, Rigify could solve this problem; however, I am unsure how well it would integrate with Bastioni Lab.

Rendering

While rendering the celebration I experienced several crashes due to segmentation faults, which were not reliably reproducible. They happened either at the beginning of the rendering, after everything had been computed and the first samples were being rendered, or at the end, when the image was to be saved. At least the second kind I could no longer reproduce after updating to Blender 2.78c. As the rendering requires up to 4 GB of memory, I think this may be somehow related. Anyway, this led me to try rendering the animation from the command line, and I found that rendering a frame this way is a bit faster (~5-10%):

./blender -d -b <path to blend file> -o <output directory> -s <start frame> -e <end frame> -a
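If the segmentation faults keep showing up, one option is to drive the same command from a small program and render the animation in smaller frame ranges. That way a crash only requires re-running one range. The sketch below is hypothetical: `RenderChunks`, its methods, the paths, and the chunk size are made up for illustration, and it only reuses the flags from the command above. Blender evaluates its arguments in order, so `-o`, `-s` and `-e` have to come before `-a`.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical helper that builds one Blender command line per chunk of frames. */
public class RenderChunks {
    /** Same flags as the command above: -d debug output, -b no UI, then output dir, start, end. */
    static List<String> command(String blender, String blendFile, String outDir, int start, int end) {
        return List.of(blender, "-d", "-b", blendFile,
                "-o", outDir,
                "-s", Integer.toString(start),
                "-e", Integer.toString(end),
                "-a"); // must follow -o/-s/-e, since Blender evaluates arguments in order
    }

    /** Splits [start, end] into ranges of at most chunkSize frames, one command each. */
    static List<List<String>> chunked(String blender, String blendFile, String outDir,
                                      int start, int end, int chunkSize) {
        List<List<String>> commands = new ArrayList<>();
        for (int s = start; s <= end; s += chunkSize) {
            int e = Math.min(end, s + chunkSize - 1);
            commands.add(command(blender, blendFile, outDir, s, e));
        }
        return commands;
    }

    public static void main(String[] args) {
        // Paths and frame numbers are placeholders.
        for (List<String> cmd : chunked("./blender", "celebration.blend", "/tmp/render/", 1, 250, 50)) {
            System.out.println(String.join(" ", cmd));
            // To actually render a chunk and detect a crash via its exit code:
            // int exit = new ProcessBuilder(cmd).inheritIO().start().waitFor();
        }
    }
}
```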
